Rust Source-based Code Coverage

Support for LLVM-based coverage instrumentation has been stabilized in Rust 1.60.0.

If you have a previous version of Rust installed via rustup, you can get 1.60.0 with:

rustup update stable

To get the code coverage report in Rust, you need to generate profiling data (1) and then use LLVM tools to process (2) and generate reports (3).

(1) To generate profiling data, rebuild your code with -C instrument-coverage, for example:

RUSTFLAGS="-C instrument-coverage" cargo build

Then run the resulting binary (target/debug/coverage-testing in this example). When it exits, the instrumented binary writes a default.profraw file to the current directory:

$ ll *.prof*
default.profraw

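If you have multiple binaries or projects, each run overwrites this file. To avoid that, set the LLVM_PROFILE_FILE environment variable when running the instrumented code to change the output file name. The rustc book's chapter on instrumentation-based code coverage lists the supported patterns, such as %p (process ID) and %m (binary signature).

For example:

LLVM_PROFILE_FILE="profile-%p-%m.profraw" \
    RUSTFLAGS="-C instrument-coverage" cargo test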
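With a pattern like the one above, each process or binary writes its own .profraw file, so the merge step needs the full list of files rather than a single default.profraw. A minimal sketch of gathering them follows; `collect_profraw` is a hypothetical helper written for illustration, not part of cargo, rustc, or the LLVM tools.

```rust
use std::fs;
use std::io;
use std::path::{Path, PathBuf};

/// Return every `*.profraw` file directly inside `dir`, sorted by path.
/// Hypothetical helper for illustration only.
fn collect_profraw(dir: &Path) -> io::Result<Vec<PathBuf>> {
    let mut files = Vec::new();
    for entry in fs::read_dir(dir)? {
        let path = entry?.path();
        // Keep regular files whose extension is exactly "profraw".
        let is_profraw = path.extension().and_then(|ext| ext.to_str()) == Some("profraw");
        if path.is_file() && is_profraw {
            files.push(path);
        }
    }
    // Sort so the result does not depend on directory iteration order.
    files.sort();
    Ok(files)
}
```

The returned paths can then be passed as the inputs of llvm-profdata merge in place of a single file name.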

For (2), the llvm-tools-preview component includes llvm-profdata for processing and merging raw profile output (coverage region execution counts):

rustup component add llvm-tools-preview

$(rustc --print target-libdir)/../bin/llvm-profdata \
    merge -sparse default.profraw -o default.profdata

$ ll *.prof*
default.profdata default.profraw
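If you prefer to drive this step from a Rust program (an xtask, for instance), the same path and arguments can be assembled in code. This is a sketch under stated assumptions: `llvm_tool_path` and `merge_args` are hypothetical helper names, and `target_libdir` is assumed to hold the output of rustc --print target-libdir.

```rust
use std::path::{Path, PathBuf};

/// Build `<target-libdir>/../bin/<tool>`, the layout the shell command relies on.
/// `target_libdir` is assumed to be the output of `rustc --print target-libdir`.
fn llvm_tool_path(target_libdir: &Path, tool: &str) -> PathBuf {
    target_libdir.join("..").join("bin").join(tool)
}

/// Arguments equivalent to `merge -sparse <inputs...> -o <output>`.
fn merge_args(inputs: &[PathBuf], output: &Path) -> Vec<String> {
    let mut args = vec!["merge".to_string(), "-sparse".to_string()];
    // Each raw profile becomes one positional argument.
    args.extend(inputs.iter().map(|p| p.display().to_string()));
    args.push("-o".to_string());
    args.push(output.display().to_string());
    args
}
```

Both values can be handed to std::process::Command, for example `Command::new(llvm_tool_path(&libdir, "llvm-profdata")).args(merge_args(&inputs, &output)).status()`.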

The llvm-tools-preview component also includes llvm-cov for report generation (3). llvm-cov combines the processed output from llvm-profdata with the binary itself, because the binary embeds a mapping from counters to actual source code regions. The -Xdemangler=rustfilt option below assumes rustfilt is installed, for example with cargo install rustfilt:

$(rustc --print target-libdir)/../bin/llvm-cov \
    show -Xdemangler=rustfilt target/debug/coverage-testing \
    -instr-profile=default.profdata \
    -show-line-counts-or-regions \
    -show-instantiations

The annotated report looks like this:

    1|      1|fn main() {
    2|      1|    println!("Hello, world!");
    3|      1|}
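In the hello-world report every line runs exactly once, so the counts are not very informative. A function with branches makes the report more useful. The sketch below is a hypothetical example (the `classify` function and its test are not from the original project): its single unit test exercises only the first branch, so the other two branches would be expected to show an execution count of 0.

```rust
/// Classify an integer by sign (hypothetical example function).
fn classify(n: i32) -> &'static str {
    if n > 0 {
        "positive"
    } else if n < 0 {
        "negative"
    } else {
        "zero"
    }
}

#[cfg(test)]
mod tests {
    use super::classify;

    #[test]
    fn classifies_positive_numbers() {
        // Only the `n > 0` branch runs here; the "negative" and "zero"
        // branches are never executed by this test.
        assert_eq!(classify(7), "positive");
    }
}
```

To collect the profile from tests, run cargo test with the same RUSTFLAGS. In that case the binary to pass to llvm-cov is the test executable that cargo places under target/debug/deps, not the main binary.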

All in one script for code coverage

Here is a script that combines all the steps above, used by most of my projects: https://github.com/duyet/cov-rs

bash <(curl -s https://raw.githubusercontent.com/duyet/cov-rs/master/cov.sh)

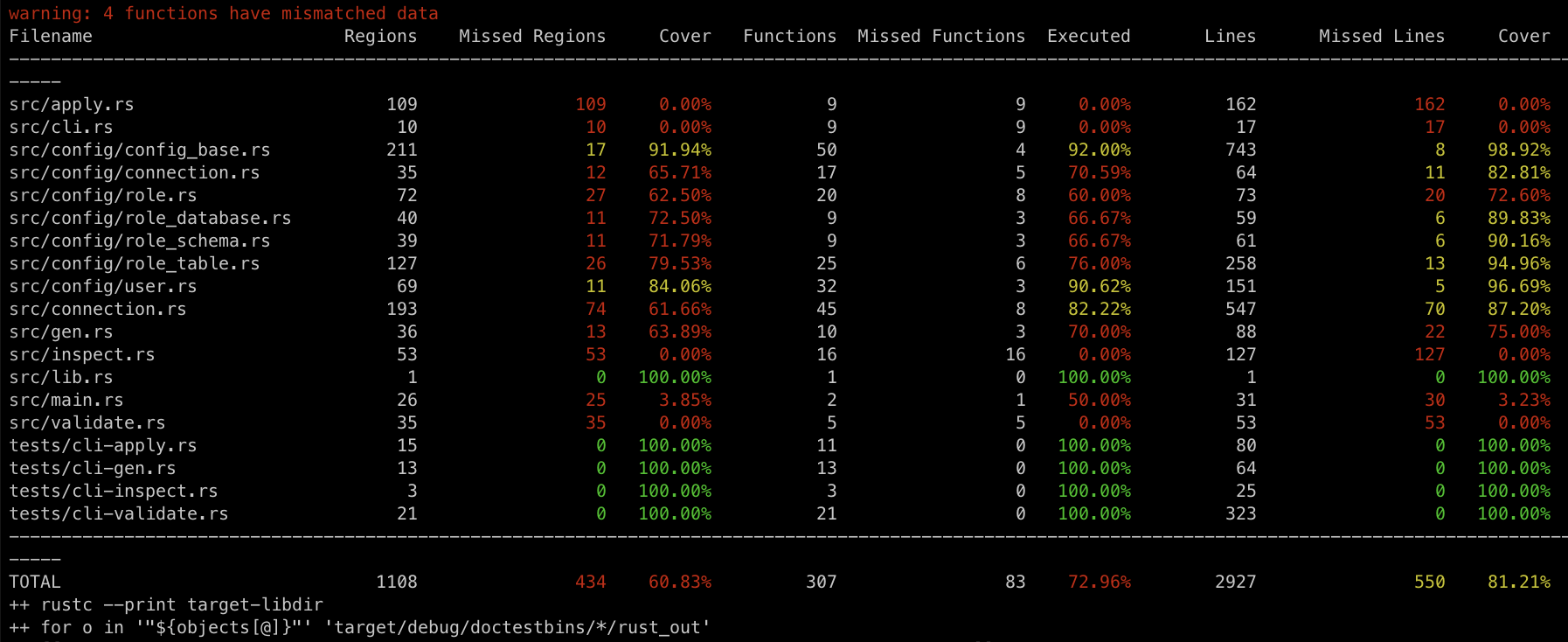

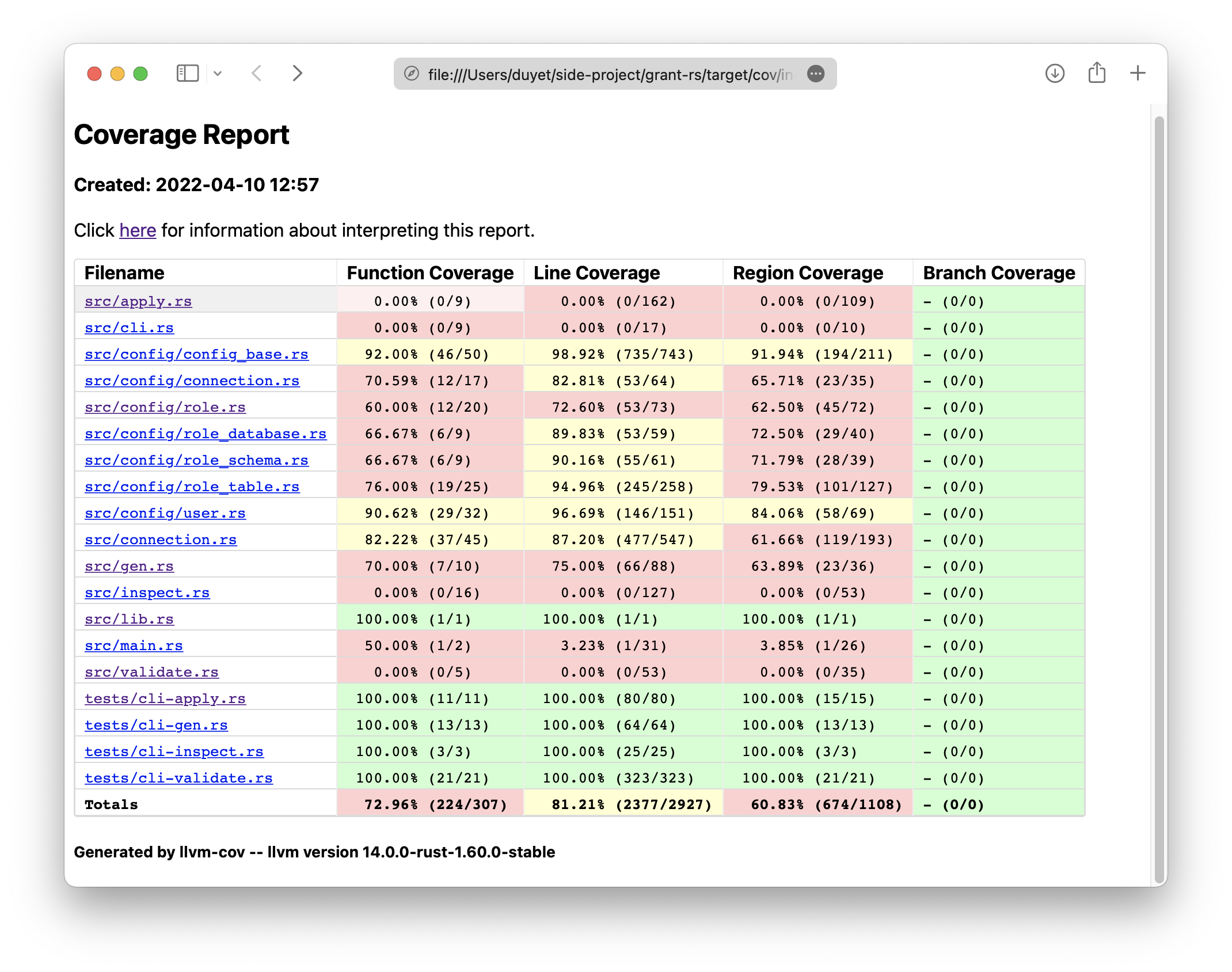

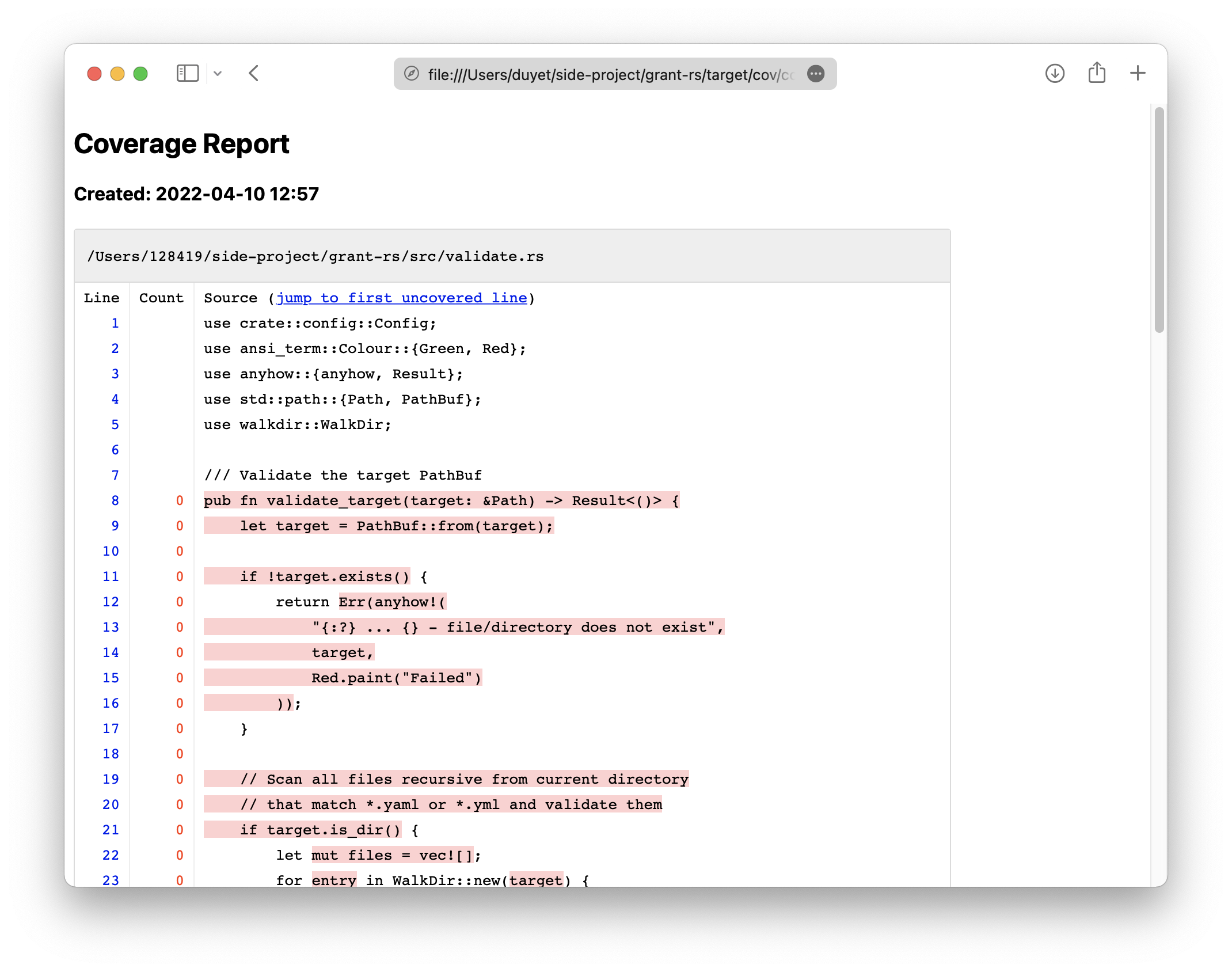

The script installs the necessary crates, runs the unit tests to generate profraw files, merges them into profdata, then renders the report in the terminal and as HTML. It also supports workspaces and can post the report as a comment on GitHub pull requests.
